Concurrency in Java – Developer’s Perspective

Why Concurrency Even Matters

If you’ve been writing Java for a while, you’ve already been using threads — even if you didn’t realize it.

That main() method? It runs on a thread.

But things get interesting when your application needs to do more than one thing at a time.

Think about:

- handling multiple API requests

- processing background jobs

- sending emails without blocking the main flow

If everything runs in a single thread, your app becomes slow and unresponsive pretty quickly.

That’s where concurrency comes in.

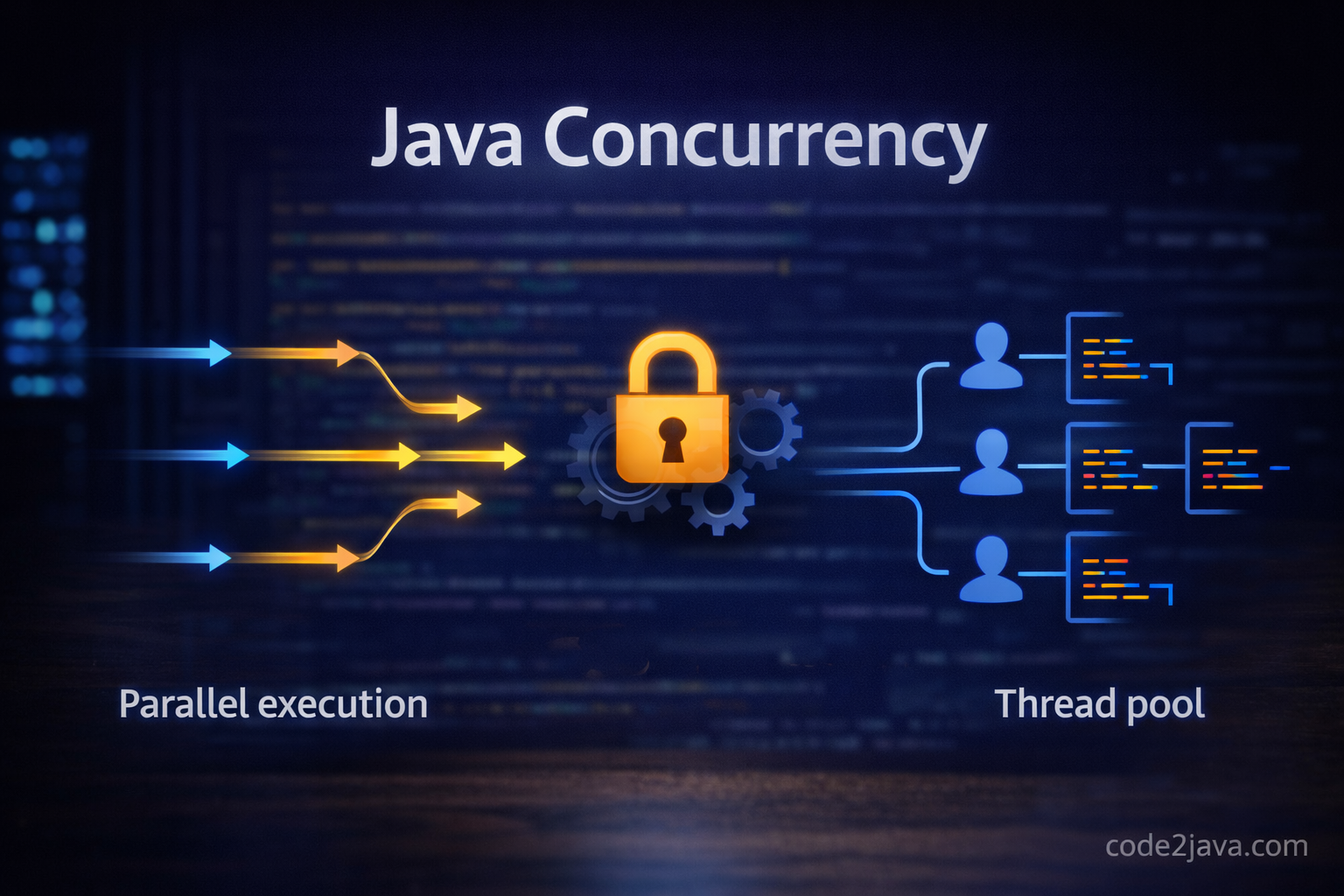

Concurrency vs Parallelism (Quick Clarity)

This confusion is very common.

- Concurrency → managing multiple tasks at once

- Parallelism → actually running tasks at the same time

You can have concurrency even on a single CPU. The system just switches between tasks very fast.

In most real-world Java applications, you design for concurrency. Whether tasks actually run in parallel depends on how many cores are available and how the scheduler assigns threads to them.

Understanding Threads in Java

Everything starts with threads.

Here’s the simplest way to create one:

class MyTask extends Thread {

public void run() {

System.out.println("Running in " + Thread.currentThread().getName());

}

}

public class Main {

public static void main(String[] args) {

new MyTask().start();

}

}

Important Insight

Always call start(), not run().

Calling run() directly just executes it like a normal method — no new thread is created.

I’ve actually seen this mistake in real projects, and it’s surprisingly common.
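To make the difference visible, here is a minimal sketch that records which thread actually executed the task in each case. The class name StartVsRunDemo and the thread name "worker" are made up for illustration.

```java
// Hypothetical demo class; the names here are not from any library.
class StartVsRunDemo {

    // Calls run() directly and returns the name of the thread that executed the task.
    static String threadNameViaRun() {
        String[] seen = new String[1];
        Thread t = new Thread(() -> seen[0] = Thread.currentThread().getName(), "worker");
        t.run(); // plain method call: the task executes on the caller's thread
        return seen[0];
    }

    // Calls start() and returns the name of the thread that executed the task.
    static String threadNameViaStart() {
        String[] seen = new String[1];
        Thread t = new Thread(() -> seen[0] = Thread.currentThread().getName(), "worker");
        t.start(); // a new thread named "worker" is created
        try {
            t.join(); // wait for the worker so its write to seen[0] is visible here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return seen[0];
    }

    public static void main(String[] args) {
        System.out.println("run():   " + threadNameViaRun());
        System.out.println("start(): " + threadNameViaStart());
    }
}
```

With run(), the reported name is the caller's thread. With start(), it is "worker".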

What Happens Internally When You Call start()

This is something that makes debugging easier once you understand it.

When you call start():

- The JVM asks the OS to create a new thread

- The thread moves to a runnable state

- A scheduler decides when it runs

- Eventually, your run() method executes

👉 You don’t control when the thread runs — the scheduler does.

That’s why concurrency bugs often feel random.
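You can observe part of this lifecycle with Thread.getState(). The sketch below only checks the two states that are deterministic: NEW before start() and TERMINATED after join(). The class name ThreadStateDemo is an assumption for illustration.

```java
// Hypothetical demo class for observing thread lifecycle states.
class ThreadStateDemo {

    // Returns the state before start() and the state after the thread has finished.
    static Thread.State[] observeStates() {
        Thread t = new Thread(() -> { /* no work needed for this demo */ });
        Thread.State before = t.getState(); // NEW: start() has not been called yet
        t.start();                          // the thread becomes runnable; the scheduler picks when it runs
        try {
            t.join();                       // wait until run() has returned
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        Thread.State after = t.getState();  // TERMINATED once join() returns normally
        return new Thread.State[] { before, after };
    }

    public static void main(String[] args) {
        Thread.State[] states = observeStates();
        System.out.println(states[0] + " -> " + states[1]);
    }
}
```

The states in between (RUNNABLE, BLOCKED, WAITING) depend on scheduling, which is why the sketch does not try to assert them.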

Using Runnable (Better Approach)

In real applications, you rarely extend Thread.

Instead:

class MyTask implements Runnable {

public void run() {

System.out.println("Running in " + Thread.currentThread().getName());

}

}

Thread t = new Thread(new MyTask());

t.start();

Why This Is Preferred

- You can extend another class

- Cleaner design (task is separate from thread)

- Easier to manage in larger codebases
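Since Runnable has a single method, you can also write the task as a lambda and hand the same task to more than one thread. This is a small sketch of that separation; the class name and the thread names "worker-a" and "worker-b" are made up.

```java
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

// Hypothetical demo: one task definition, two threads running it.
class RunnableLambdaDemo {

    // Runs the same Runnable on two threads and returns the thread names it saw.
    static List<String> runOnTwoThreads() {
        List<String> names = new CopyOnWriteArrayList<>(); // thread-safe list for the results
        Runnable task = () -> names.add(Thread.currentThread().getName());

        Thread a = new Thread(task, "worker-a");
        Thread b = new Thread(task, "worker-b");
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return names;
    }

    public static void main(String[] args) {
        System.out.println(runOnTwoThreads());
    }
}
```

The task knows nothing about the thread that runs it, which is the "cleaner design" point in practice.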

The Real Problem: Shared Data

Threads themselves are not the problem.

Shared data is.

Let’s look at a simple example:

class Counter {

int count = 0;

void increment() {

count++;

}

}

Now run this with multiple threads:

Counter counter = new Counter();

Thread t1 = new Thread(() -> {

for (int i = 0; i < 1000; i++) counter.increment();

});

Thread t2 = new Thread(() -> {

for (int i = 0; i < 1000; i++) counter.increment();

});

t1.start();

t2.start();

// join() can throw InterruptedException, so the enclosing method must declare or handle it

t1.join();

t2.join();

System.out.println(counter.count);

You expect 2000.

You won’t always get it.

Why Race Condition Happens

count++ is not a single operation.

Internally it does:

- Read value

- Increment

- Write back

Now imagine two threads doing this:

- Both read 5

- Both write 6

You just lost one update.

This is called a race condition.
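The interleaving above can be replayed step by step in a single thread. This is only a simulation of what two threads might do (the variable names readByA and readByB are made up), but it shows exactly where the update gets lost.

```java
// Hypothetical single-threaded replay of the lost-update interleaving.
class LostUpdateReplay {

    static int replay() {
        int count = 5;

        int readByA = count;   // thread A reads 5
        int readByB = count;   // thread B reads 5 before A has written back

        count = readByA + 1;   // thread A writes 6
        count = readByB + 1;   // thread B also writes 6, overwriting A's update

        return count;          // 6, although two increments ran
    }

    public static void main(String[] args) {
        System.out.println(replay()); // prints 6
    }
}
```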

Fixing It Using synchronized

class Counter {

int count = 0;

synchronized void increment() {

count++;

}

}

Now only one thread at a time can execute this method on a given Counter instance.

How synchronized Works Internally

Every object in Java has a monitor (lock).

- Thread tries to acquire the lock

- If available → proceeds

- If not → waits

- After execution → releases the lock

This ensures mutual exclusion.
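A synchronized method locks on this. The same acquire and release steps can be written out with a synchronized block on an explicit lock object, which is sketched below. The class name LockedCounter and the private lock field are assumptions for illustration, not a required pattern.

```java
// Hypothetical counter that makes the monitor explicit with a private lock object.
class LockedCounter {
    private final Object lock = new Object();
    private int count = 0;

    void increment() {
        synchronized (lock) { // acquire the monitor, or wait if another thread holds it
            count++;
        }                     // the monitor is released here, even if an exception is thrown
    }

    int get() {
        synchronized (lock) { // reads take the same lock so they see the latest value
            return count;
        }
    }

    // Two threads, 1000 increments each.
    static int runTwoThreads() {
        LockedCounter counter = new LockedCounter();
        Runnable work = () -> {
            for (int i = 0; i < 1000; i++) counter.increment();
        };
        Thread t1 = new Thread(work);
        Thread t2 = new Thread(work);
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(runTwoThreads()); // prints 2000
    }
}
```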

Performance Trade-Off (Real Insight)

synchronized solves correctness, but:

- It blocks threads

- Too much blocking reduces performance

In high-load systems, this becomes a bottleneck.
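One common way to limit the cost is to hold the lock only around the shared update and do everything else outside it. The sketch below assumes a made-up expensive() helper standing in for real work; it is one possible shape, not a benchmark.

```java
// Hypothetical example of keeping the critical section small.
class NarrowLockDemo {
    private final Object lock = new Object();
    private long total = 0;

    // Stand-in for expensive per-item work that touches no shared state.
    private static long expensive(int n) {
        long sum = 0;
        for (int i = 1; i <= n; i++) sum += i;
        return sum;
    }

    void add(int n) {
        long result = expensive(n); // computed outside the lock, so threads do not block each other here
        synchronized (lock) {
            total += result;        // only the shared update is guarded
        }
    }

    long total() {
        synchronized (lock) {
            return total;
        }
    }

    // Two threads, 100 calls each, every call adds expensive(10) = 55.
    static long run() {
        NarrowLockDemo demo = new NarrowLockDemo();
        Runnable work = () -> {
            for (int i = 0; i < 100; i++) demo.add(10);
        };
        Thread t1 = new Thread(work);
        Thread t2 = new Thread(work);
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return demo.total();
    }

    public static void main(String[] args) {
        System.out.println(run()); // prints 11000
    }
}
```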

Using Thread Pools (ExecutorService)

Creating threads repeatedly is expensive.

Instead, we reuse them.

import java.util.concurrent.*;

ExecutorService executor = Executors.newFixedThreadPool(3);

for (int i = 0; i < 5; i++) {

int id = i;

executor.submit(() -> {

System.out.println("Task " + id + " running in " + Thread.currentThread().getName());

});

}

executor.shutdown();

What’s Happening Internally

- The pool creates at most a fixed number of threads (3 in this example)

- Tasks are placed in a queue

- Threads pick tasks one by one

This is how most production systems handle concurrency.
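Tasks in a pool can also return values. Here is a minimal sketch that submits value-returning tasks and collects the results through Future. The class name PoolSumDemo and the sum-of-squares workload are made up for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical demo: submit tasks to a fixed pool and combine their results.
class PoolSumDemo {

    static int sumOfSquares(int n) {
        ExecutorService executor = Executors.newFixedThreadPool(3);
        try {
            List<Future<Integer>> futures = new ArrayList<>();
            for (int i = 1; i <= n; i++) {
                int value = i; // effectively final copy for the lambda
                futures.add(executor.submit(() -> value * value)); // queued until a pool thread is free
            }
            int sum = 0;
            for (Future<Integer> future : futures) {
                sum += future.get(); // blocks until that task has finished
            }
            return sum;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        } catch (ExecutionException e) {
            throw new IllegalStateException(e.getCause());
        } finally {
            executor.shutdown(); // stop accepting new tasks and let the pool threads exit
        }
    }

    public static void main(String[] args) {
        System.out.println(sumOfSquares(5)); // prints 55
    }
}
```

Five tasks share three threads here, so at least two of them wait in the queue before running.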

Atomic Variables (Better for Simple Cases)

Instead of using locks:

synchronized void increment() {

count++;

}

Use:

import java.util.concurrent.atomic.AtomicInteger;

class Counter {

AtomicInteger count = new AtomicInteger();

void increment() {

count.incrementAndGet();

}

}

Why This Works Better

It uses CAS (Compare-And-Swap):

- Check current value

- Update if unchanged

- Retry if needed

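No blocking. This is usually faster than a lock when contention is low to moderate. Under very heavy contention the retries add up, and a class like LongAdder may be a better fit.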
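The check, update, retry steps can be written out by hand with AtomicInteger.compareAndSet. incrementAndGet already does the equivalent for you, so treat this as a sketch of the idea rather than something you would normally write. The class name CasCounter is made up.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical counter that spells out the CAS retry loop.
class CasCounter {
    private final AtomicInteger count = new AtomicInteger();

    int increment() {
        while (true) {
            int current = count.get();              // check the current value
            int next = current + 1;
            if (count.compareAndSet(current, next)) {
                return next;                        // updated because nobody changed it in between
            }
            // another thread won the race: loop and retry with the fresh value
        }
    }

    int get() {
        return count.get();
    }

    // Two threads, 1000 increments each.
    static int runTwoThreads() {
        CasCounter counter = new CasCounter();
        Runnable work = () -> {
            for (int i = 0; i < 1000; i++) counter.increment();
        };
        Thread t1 = new Thread(work);
        Thread t2 = new Thread(work);
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(runTwoThreads()); // prints 2000
    }
}
```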

Visibility Problem and volatile

Sometimes, threads don’t see updated values.

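This happens because a thread may keep working with a value held in a register or a core-local cache, and the compiler or JIT is allowed to reorder or hoist reads of ordinary fields.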

Fix:

volatile boolean running = true;

Important

- Ensures visibility across threads

- Does NOT guarantee atomicity
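A typical use is a stop flag: one thread writes it, another thread reads it in a loop. Here is a minimal sketch; the class name StoppableWorker and the 2 second join timeout are assumptions for the demo.

```java
// Hypothetical worker that loops until another thread clears a volatile flag.
class StoppableWorker implements Runnable {
    private volatile boolean running = true; // volatile: the worker is guaranteed to see the update

    @Override
    public void run() {
        while (running) {
            Thread.yield(); // stand-in for real work
        }
    }

    void stop() {
        running = false; // a single write, so no atomicity issue here
    }

    // Starts a worker, asks it to stop, and reports whether it actually exited.
    static boolean startAndStop() {
        StoppableWorker worker = new StoppableWorker();
        Thread t = new Thread(worker);
        t.start();
        worker.stop();
        try {
            t.join(2000); // generous timeout so the demo cannot hang
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return !t.isAlive();
    }

    public static void main(String[] args) {
        System.out.println("worker stopped: " + startAndStop());
    }
}
```

A flag like this works because there is only one simple write. Something like count++ on a volatile field would still lose updates.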

Deadlock (When Things Go Really Wrong)

Classic situation:

synchronized (lock1) {

synchronized (lock2) {

// work

}

}

Another thread:

synchronized (lock2) {

synchronized (lock1) {

// stuck forever

}

}

Both threads wait forever.

Application hangs.
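One common way to avoid this is to make every thread acquire the locks in the same order. The sketch below assumes that fix; the class name LockOrderingDemo and the shared counter are made up for illustration.

```java
// Hypothetical example: both threads take lock1 first, then lock2, so no cycle can form.
class LockOrderingDemo {
    private final Object lock1 = new Object();
    private final Object lock2 = new Object();
    private int shared = 0;

    void work() {
        synchronized (lock1) {     // always first
            synchronized (lock2) { // always second
                shared++;
            }
        }
    }

    // Two threads, 1000 calls each, both using the same lock order.
    static int run() {
        LockOrderingDemo demo = new LockOrderingDemo();
        Runnable task = () -> {
            for (int i = 0; i < 1000; i++) demo.work();
        };
        Thread t1 = new Thread(task);
        Thread t2 = new Thread(task);
        t1.start();
        t2.start();
        try {
            t1.join();
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return demo.shared; // safe to read: join() makes the workers' writes visible
    }

    public static void main(String[] args) {
        System.out.println(run()); // prints 2000
    }
}
```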

How Your Thinking Evolves

Over time, you stop thinking:

“How do I manage threads?”

And start thinking:

- Can I avoid shared state?

- Can I use immutable objects?

- Can I use thread pools instead?

Because fewer shared variables = fewer bugs.
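As a small illustration of avoiding shared state, here is the earlier counter example reworked so that each thread counts into its own local variable and the results are combined after join(). The class name NoSharedStateDemo is made up, and this is just one possible way to structure it.

```java
// Hypothetical rework of the counter example with no shared mutable counter.
class NoSharedStateDemo {

    static int countWithoutSharing() {
        int[] results = new int[2]; // each thread writes only its own slot, once, at the end

        Thread t1 = new Thread(() -> {
            int local = 0;          // thread-confined, so no lock is needed
            for (int i = 0; i < 1000; i++) local++;
            results[0] = local;
        });
        Thread t2 = new Thread(() -> {
            int local = 0;
            for (int i = 0; i < 1000; i++) local++;
            results[1] = local;
        });

        t1.start();
        t2.start();
        try {
            t1.join();              // join() makes each thread's write visible to the caller
            t2.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return results[0] + results[1];
    }

    public static void main(String[] args) {
        System.out.println(countWithoutSharing()); // prints 2000
    }
}
```

No synchronized, no atomics, and the result is still always 2000.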

Final Thought

Concurrency is not hard because of syntax.

It’s hard because of timing.

Execution order is not guaranteed.

And once you accept that, you naturally start writing safer code:

- minimize shared state

- prefer high-level APIs

- avoid manual thread handling when possible

That’s when concurrency starts feeling predictable instead of scary.