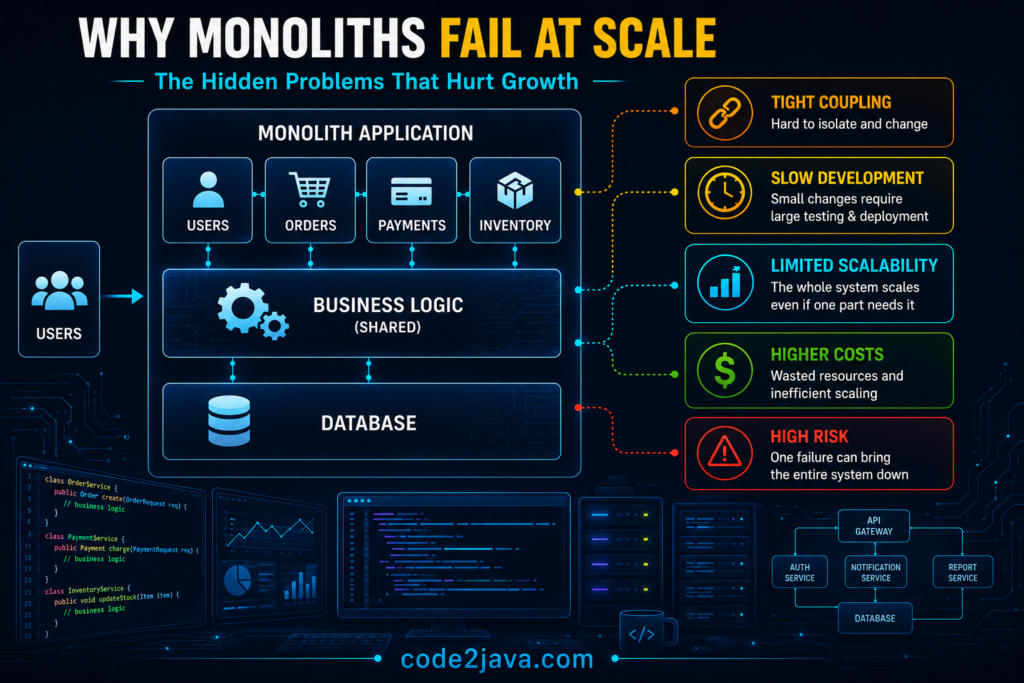

The Breaking Point — Why Monoliths Fail at Scale

1. Architectural Context

A monolith works well in the beginning because everything runs in one place – one application, one database, one transaction boundary.

When a request comes in, it is processed completely inside a single JVM. If anything fails, everything rolls back. This gives strong consistency and predictable behaviour. At this stage, the system is simple, fast, and easy to debug.

The hidden assumption is that all parts of the system can continue sharing the same runtime as load increases. This assumption does not hold at scale.

2. Shared Runtime Becomes a Problem

As the system grows, it starts doing more work at the same time. User requests run alongside background jobs like reporting, syncing, or batch processing. All of these use the same CPU, memory, and threads. The JVM does not prioritize business logic. It treats all workloads equally.

As background work increases, memory usage grows and garbage collection runs more often. When GC runs, it pauses all threads. This causes random delays in user requests. The system still works, but response time becomes inconsistent.

3. Scaling Resources Does Not Fix It

Adding more CPU or memory helps temporarily, but the problem comes back. The issue is not lack of resources. It is that everything is sharing the same resources. More memory reduces how often GC runs, but increases how long it pauses. More CPU adds capacity, but threads still compete.

The system becomes harder to predict as load increases.

4. Database Becomes a Bottleneck

All operations in a monolith go through the same database. At high traffic, multiple requests try to update the same data. The database locks rows to maintain consistency.

Reference: https://docs.oracle.com/en/database/oracle/oracle-database/19/cncpt/transactions.html

As locks increase:

- Transactions wait

- Execution slows down

- Throughput drops

The system becomes slow not because it cannot process requests, but because requests are waiting.

5. Thread Blocking Limits Throughput

Each request uses one thread from start to finish.

When the database is slow or locked, threads wait. While waiting, they still occupy system resources.

As more threads wait:

- Thread pools fill up

- New requests get delayed

- Latency increases

Eventually, the system cannot accept new requests quickly, even if CPU is available.

6. Deployment Becomes Risky

In a monolith, everything is deployed together. Even a small change requires restarting the entire application.

When the system restarts:

- Caches are cleared

- Connections reset

- Load on the database increases temporarily

This causes short-term instability.

The bigger issue is that every change affects the whole system. This increases risk and slows down releases.

7. Team Scaling Becomes Difficult

As more teams work on the system, they all share the same codebase and release cycle. Changes need coordination. Testing becomes complex because everything is connected. Over time, development slows down because the system is tightly coupled.

8. The Real Breaking Point

At this stage, the system has been optimized in many ways:

- JVM tuning

- Database tuning

- Infrastructure scaling

But problems still remain:

- Latency is inconsistent

- Throughput does not scale well

- Deployments are risky

The root issue is not performance tuning. It is that everything is tightly connected:

- Same runtime

- Same database

- Same deployment

This coupling limits how far the system can scale.

9. From Production Perspective

Assume in high-traffic system, one can improve performance multiple times through tuning. Each change may help slightly, but problems return back under load.

One can analyse threads behaviour, GC logs, and database locks, and most of the times the clear picture that comes out is: The system was not inefficient. It was constrained by design. All parts were sharing the same resources, and that became the limit.

10. Summary

A monolith works well when the system is small and controlled.

At scale, it starts facing issues because everything is shared:

- Runtime resources create contention

- Database becomes a bottleneck

- Threads get blocked

- Deployments affect the entire system

These problems make the system unpredictable under load. That unpredictability is the real breaking point.