Security in Microservices — Why Security Becomes Harder in Distributed Systems

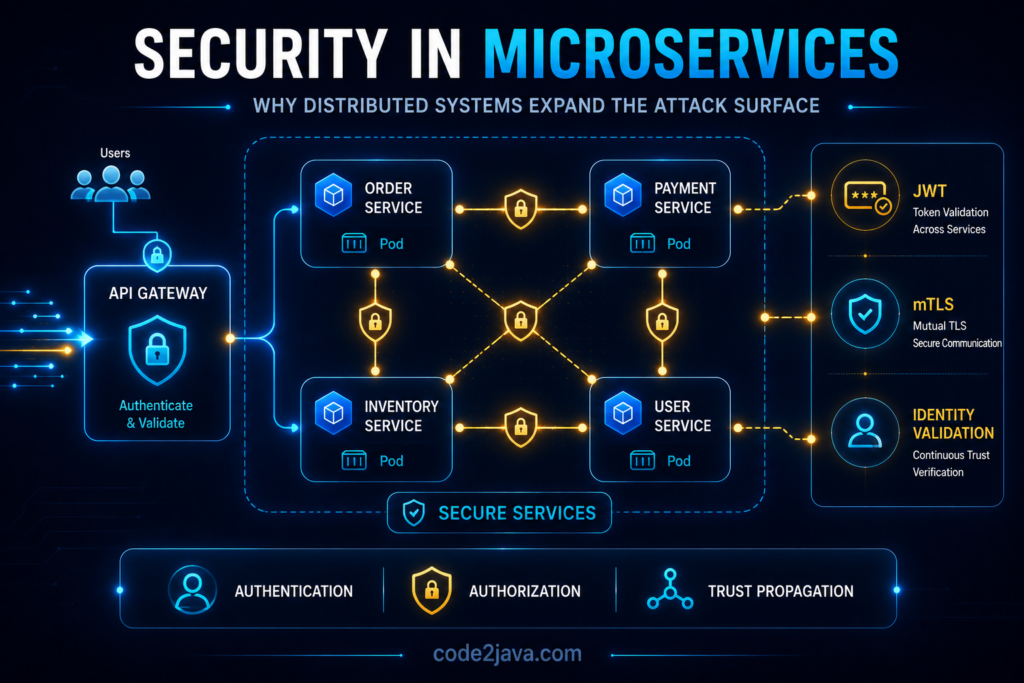

1. Why Security Changes Completely in Distributed Systems

Security inside monolithic applications is usually concentrated around a few controlled entry points. Most communication happens internally within the same runtime process, which naturally limits exposure. Teams primarily focus on securing external APIs, authentication layers, and database access because internal execution paths are considered trusted by default.

Microservices fundamentally change that assumption.

As systems become distributed, communication that previously happened inside one process now travels continuously across the network. Services exchange requests through APIs, message brokers, gateways, proxies, and asynchronous event streams. Every service boundary becomes a network boundary, and every network boundary introduces potential exposure.

This creates a major shift in how architects must think about security.

The problem is no longer limited to protecting external traffic. The architecture itself now contains dozens or even hundreds of internal communication paths that must be validated continuously. Authentication, authorisation, encryption, identity propagation, and traffic policies become runtime responsibilities spread across the entire platform.

The system gains scalability and flexibility, but it also expands the operational attack surface dramatically.

2. Internal Traffic Is No Longer Automatically Safe

One of the most dangerous assumptions teams carry from monolithic systems into microservices is the belief that internal traffic can still be trusted implicitly.

Inside monoliths, modules communicate through local method calls within the same runtime. Since execution remains inside one application boundary, developers rarely think about authenticating communication between internal components.

Microservices remove that safety boundary entirely.

Every service interaction now crosses infrastructure layers such as load balancers, service meshes, Kubernetes networking, ingress controllers, and proxies. Requests move across dynamically changing infrastructure where workloads may shift continuously between nodes and clusters.

At that point, internal traffic becomes just as important to secure as external traffic.

A compromised service may attempt to access neighboring services laterally. Misconfigured APIs may expose sensitive internal operations unintentionally. Excessive permissions may allow one service to interact with systems it should never reach.

This is why modern distributed architectures increasingly adopt zero-trust principles.

Instead of assuming internal communication is trusted automatically, the platform continuously validates every request regardless of where it originates.

3. Service-to-Service Authentication Becomes a Core Runtime Requirement

As microservices architectures grow, authentication stops being a user-only concern.

Services themselves must authenticate continuously with one another while processing traffic. APIs, schedulers, event consumers, asynchronous processors, and background jobs all participate in machine-to-machine communication at extremely large scale.

This introduces a completely different category of operational security. The challenge is no longer validating human users occasionally. The system must validate service identities constantly while maintaining low latency and high throughput across distributed infrastructure.

In production environments, this usually relies on mechanisms such as:

- JWTs (JSON Web Tokens) → Compact signed tokens that carry identity and authorisation claims between distributed services.

- OAuth 2.0 flows → Standard authorisation flows that let applications obtain scoped access tokens, either on behalf of users or, with the client credentials grant, as the service itself.

- mTLS certificates (Mutual TLS) → A security mechanism where both client and server verify each other’s identity using certificates before communication begins.

- Workload Identities → Cloud-native identities assigned directly to applications or workloads so they can securely access resources without hardcoded credentials.

- Service Accounts → Dedicated non-human identities used by applications or services to authenticate and interact securely with other systems or infrastructure.

However, the complexity grows rapidly because every new service introduces additional trust relationships.

A small distributed platform may contain manageable communication paths. Large-scale systems quickly evolve into environments where hundreds of services continuously exchange authenticated traffic across multiple infrastructure layers.

Without strong service authentication, the architecture becomes vulnerable to lateral movement attacks where one compromised service gains unauthorized access to other internal systems.

At scale, identity management becomes one of the most important runtime capabilities in the entire platform.
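As a rough illustration of the mutual TLS mechanism listed above, the sketch below builds a server-side TLS context with Python's standard `ssl` module that refuses clients who do not present a certificate. The function name and the file path parameters are assumptions made for this example; real platforms usually delegate certificate issuance and rotation to a mesh or workload identity system.

```python
import ssl


def build_mtls_server_context(cert_file=None, key_file=None, ca_file=None):
    """Return a server-side TLS context that requires a client certificate.

    All three paths are hypothetical; they are optional here only so the
    sketch can be constructed without real certificate files.
    """
    # PROTOCOL_TLS_SERVER starts with no trusted CAs loaded, so only the
    # internal CA passed in below can vouch for a calling service.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2

    # CERT_REQUIRED is what makes the handshake mutual: a client that
    # presents no certificate, or an untrusted one, is rejected.
    ctx.verify_mode = ssl.CERT_REQUIRED

    if ca_file:
        ctx.load_verify_locations(cafile=ca_file)
    if cert_file and key_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

A service would pass this context to its HTTPS server. The calling service needs a matching client context that loads its own certificate and trusts the same internal CA.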

4. JWT Propagation Looks Simple Until Systems Grow

JWT-based authentication became extremely popular in distributed systems because it allows identity information to travel across services without centralised session management.

Initially, this model feels elegant.

A user authenticates once, receives a token, and downstream services use that token for authorisation decisions while processing requests. The architecture becomes stateless, horizontally scalable, and easier to distribute across infrastructure.
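To make the stateless model concrete, here is a minimal sketch of how a downstream service might verify a token without calling a session store. It uses only the Python standard library and the HS256 (shared secret) algorithm. The function names are invented for this example, and production systems would normally use a maintained JWT library with asymmetric signatures so that downstream services hold only a public key.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data):
    # JWTs use URL-safe base64 without padding.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text):
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_token(claims, secret):
    """Issue an HS256-signed token (illustrative helper, not a full JWT library)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + _b64url(signature)


def verify_token(token, secret, now=None):
    """Return the claims if the signature and expiry check out, else raise ValueError."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts

    # Pin the algorithm instead of trusting whatever the header claims.
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")

    expected = hmac.new(
        secret, (header_b64 + "." + payload_b64).encode("ascii"), hashlib.sha256
    ).digest()
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")

    claims = json.loads(_b64url_decode(payload_b64))
    current = time.time() if now is None else now
    # A token without an "exp" claim is treated as already expired.
    if claims.get("exp", 0) <= current:
        raise ValueError("token expired")
    return claims
```

Every service that receives the forwarded token repeats `verify_token` locally, which is what removes the need for a shared session store.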

Operationally, the reality becomes more complicated over time. As requests move across multiple services, tokens get forwarded repeatedly through internal APIs. Different services often interpret permissions differently depending on business responsibilities. Over time, authorisation behaviour may become inconsistent even though identity validation itself remains technically correct.

Another challenge appears when tokens accumulate excessive contextual information. Teams often begin adding permissions, claims, tenant information, feature flags, and metadata into JWT payloads. Eventually, tokens become large enough to inflate the headers of every request, and because a signed token cannot be edited after it is issued, the permissions baked into it can go stale before it expires.

The complexity increases further during asynchronous processing.

Events may continue processing long after the original authentication lifecycle has expired. Services must now decide whether authorization should rely on original user context, service identity, or newly generated runtime credentials.
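One possible answer, sketched below, is to stop forwarding the user's token into the event stream. The producer records a snapshot of the user context, and the consumer authorises the event using the producer's service identity plus that snapshot. The envelope fields, the freshness limit, and the function name are all assumptions made for this illustration, not an established standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EventEnvelope:
    event_type: str
    producer: str           # service identity that published the event
    on_behalf_of: str       # user id captured when the request was authenticated
    user_scopes: frozenset  # scopes the user held at publish time
    published_at: float     # Unix timestamp


def authorize_event(event, trusted_producers, required_scope, max_age_seconds, now):
    """Return (allowed, reason) for a consumer deciding whether to process an event."""
    # 1. Service identity: only accept events from producers we trust.
    if event.producer not in trusted_producers:
        return False, "untrusted producer"
    # 2. Freshness: refuse to act on a very old snapshot of user permissions.
    if now - event.published_at > max_age_seconds:
        return False, "user context too old"
    # 3. Original user context: the user must have held the scope at publish time.
    if required_scope not in event.user_scopes:
        return False, "user lacked scope"
    return True, "ok"
```

The trade-off is explicit here. The consumer never needs the user's original token, but it does have to trust the producer to record the user context honestly.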

JWT propagation simplifies some scaling concerns, but it also introduces hidden complexity around distributed authorization consistency.

5. API Gateways Become Central Security Control Points

As microservices architectures expand, organisations typically introduce API gateways to centralise external traffic handling. Gateways provide a controlled entry layer between external consumers and internal services. They usually handle concerns such as authentication, TLS termination, rate limiting, routing policies, and traffic filtering.

This creates important operational advantages. Instead of exposing every service publicly, teams centralise many security responsibilities at one managed entry point. The platform gains more consistent authentication enforcement, traffic visibility, and request control mechanisms.

However, API gateways do not solve distributed security completely. Many organisations mistakenly assume that once traffic passes through the gateway, internal systems become inherently safe. In reality, compromised services inside the platform may still interact with neighbouring services unless deeper runtime protections exist.

Gateways reduce exposure at the perimeter, but distributed architectures still require strong internal authorisation boundaries. Security cannot remain concentrated only at the edge once systems become highly distributed internally.
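An internal authorisation boundary can be as simple as a default-deny allowlist that each service checks before handling a call. The sketch below shows one way to express that idea. The service names, operations, and policy shape are hypothetical.

```python
# Hypothetical policy: (caller, target) -> operations the caller may invoke.
ALLOWED_CALLS = {
    ("orders", "payments"): {"POST /charges"},
    ("orders", "inventory"): {"GET /stock", "POST /reservations"},
}


def is_call_allowed(caller, target, operation, policy=ALLOWED_CALLS):
    """Default deny: any pair or operation not listed explicitly is rejected."""
    return operation in policy.get((caller, target), frozenset())
```

With this policy, `is_call_allowed("orders", "payments", "POST /charges")` is true, while a compromised `inventory` service calling `payments` is refused even though its traffic never touches the gateway.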

6. Secrets Management Becomes an Operational Challenge

Distributed systems depend heavily on secrets. Every service may require access to:

- database credentials

- API keys

- encryption certificates

- authentication tokens

- cloud credentials

- messaging platform secrets

In smaller systems, handling these secrets manually may seem workable at first. As microservices environments grow, the operational burden increases dramatically.

Hardcoding credentials inside applications quickly becomes dangerous. Shared secrets across multiple services create large blast-radius risks during compromise. Manual secret rotation becomes operationally unreliable at scale.
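As a small sketch of the alternative to hardcoding, the helper below resolves a secret at runtime from a file mounted by the platform or, failing that, from an environment variable. The function name, the error type, and the lookup order are assumptions for illustration. The point is only that the credential never appears in source code.

```python
import os
from pathlib import Path


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not provided by the runtime."""


def load_secret(name, env=None, secrets_dir=None):
    """Look up a secret by name without embedding its value in the codebase."""
    env = os.environ if env is None else env

    # Prefer a file mounted into the workload (hypothetical directory layout:
    # one file per secret, named after the secret).
    if secrets_dir is not None:
        path = Path(secrets_dir) / name
        if path.is_file():
            return path.read_text(encoding="utf-8").strip()

    # Fall back to an environment variable injected at deploy time.
    value = env.get(name)
    if value:
        return value

    # Fail loudly instead of falling back to a built-in default credential.
    raise MissingSecretError(f"secret {name!r} is not configured")
```

Giving each service its own secret names and values, rather than sharing one credential, keeps the blast radius of a leak limited to that service.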

This is why modern distributed platforms rely increasingly on centralized secrets management systems such as:

- HashiCorp Vault

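- Kubernetes Secrets (base64-encoded rather than encrypted by default, so encryption at rest and tight access control still need to be configured)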

- AWS Secrets Manager

- cloud-native key management platforms

Even with centralised tooling, operational complexity remains significant:

- Certificates expire unexpectedly

- Rotation procedures fail

- Permissions change over time

- Misconfigured access policies expose secrets unintentionally across services

In real production environments, security failures often emerge from operational inconsistency rather than weaknesses in encryption itself.

Managing secrets safely becomes an ongoing infrastructure discipline rather than a one-time configuration task.
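Part of that discipline can be automated. The sketch below flags secrets or certificates that have expired or are close to expiring, assuming an inventory that maps each secret name to its expiry time. The inventory format, the 14-day warning window, and the status labels are illustrative choices, not a convention of any particular tool.

```python
from datetime import datetime, timedelta, timezone


def rotation_status(not_after, now=None, warn_within=timedelta(days=14)):
    """Classify one secret by how close it is to its expiry time."""
    now = now or datetime.now(timezone.utc)
    if not_after <= now:
        return "expired"
    if not_after - now <= warn_within:
        return "rotate-soon"
    return "ok"


def secrets_needing_attention(inventory, now=None):
    """Return {name: status} for every entry that is not comfortably valid.

    `inventory` is a hypothetical mapping of secret name -> expiry datetime.
    """
    findings = {}
    for name in sorted(inventory):
        status = rotation_status(inventory[name], now)
        if status != "ok":
            findings[name] = status
    return findings
```

Run on a schedule, a check like this turns an unexpected certificate expiry into an early warning.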

7. Service Meshes Add Security at the Infrastructure Layer

As distributed systems become larger, organisations increasingly adopt service meshes such as Istio or Linkerd to standardise communication security across the platform.

Service meshes move security concerns away from application code and into the infrastructure layer itself.

This allows the platform to enforce capabilities such as:

- mutual TLS

- encrypted service communication

- traffic policies

- workload identity validation

- authorization enforcement

Operationally, this creates stronger consistency because services no longer implement communication security independently.

However, service meshes introduce another important trade-off. The networking layer becomes significantly more abstract.

Requests now flow through sidecar proxies before reaching actual services. Traffic visibility improves, but debugging becomes more complicated because engineers must now reason about both application behaviour and infrastructure-level proxy behaviour simultaneously.

This pattern appears frequently in distributed systems. Security improves, but operational complexity grows alongside it.
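The idea of moving enforcement out of application code can be illustrated with a toy wrapper that plays the role of a sidecar proxy. This is a conceptual sketch only: real meshes do this in a separate proxy process using identities taken from mTLS certificates, and the names and identity strings below are hypothetical.

```python
def with_sidecar(handler, allowed_peers):
    """Wrap a business handler with an identity check, as a sidecar proxy would."""
    def proxy(peer_identity, request):
        # Enforcement happens here, before the application code ever runs.
        if peer_identity not in allowed_peers:
            return 403, "peer not allowed"
        return 200, handler(request)
    return proxy


def get_invoice(request):
    # Pure business logic: no authentication or TLS code in the service itself.
    return f"invoice {request['id']}"


# Hypothetical workload identity of the only service allowed to call us.
ORDERS = "spiffe://example.org/ns/shop/sa/orders"
invoice_endpoint = with_sidecar(get_invoice, allowed_peers={ORDERS})
```

The sketch also hints at the debugging trade-off. When `invoice_endpoint` returns 403, the cause lies in the proxy layer's policy and not in `get_invoice`, so engineers have two layers to inspect.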

8. Zero Trust Becomes Necessary at Scale

Traditional enterprise security models relied heavily on perimeter-based protection. Once traffic entered the trusted internal network, systems generally assumed communication inside the environment remained safe.

Microservices architectures break that assumption completely.

Infrastructure becomes dynamic. Containers move continuously across clusters and nodes. Cloud networking changes constantly. Workloads scale automatically based on production demand.

Defining a static trusted internal boundary becomes extremely difficult. This is why zero-trust architecture becomes increasingly important in modern distributed systems.

Zero trust assumes no request should be trusted automatically, even when communication originates internally. Every service interaction requires verifying identity, checking authorization, and enforcing security policy.

Operationally, this introduces additional overhead because authentication and authorization checks now happen everywhere throughout the system. However, it also significantly reduces the ability of attackers to move laterally across the architecture after initial compromise.

At scale, distributed trust validation becomes more reliable than relying on static infrastructure boundaries alone.

9. Security Failures Usually Begin with Misconfiguration

One of the most important realities in distributed systems security is that major incidents rarely happen because encryption algorithms fail. Most production security failures begin with operational mistakes.

Examples include:

- exposed internal APIs

- overly permissive IAM policies

- leaked credentials

- insecure gateway rules

- weak service permissions

- improperly configured Kubernetes networking

As systems grow, the number of configuration surfaces increases rapidly.

Every service introduces deployment settings, network policies, secrets, access rules, runtime identities, and infrastructure permissions. Maintaining consistency across these layers becomes extremely difficult manually.

This is why mature engineering organizations invest heavily in:

- policy automation

- infrastructure validation

- continuous compliance checks

- centralized identity management

- runtime security enforcement

At large scale, security becomes deeply connected to operational discipline and platform governance.
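Policy automation often starts with simple checks that run before deployment. The sketch below scans a list of access policies for wildcard grants, a common form of excessive permission. The policy shape (a dictionary with `name`, `principals`, `actions`, and `resources`) is invented for this example and does not match any specific cloud provider's format.

```python
def find_overly_permissive(policies):
    """Return (policy_name, field) pairs where a wildcard grants everything."""
    findings = []
    for policy in policies:
        for field in ("principals", "actions", "resources"):
            if "*" in policy.get(field, []):
                findings.append((policy["name"], field))
    return findings


# Hypothetical policies as they might be loaded from version-controlled config.
EXAMPLE_POLICIES = [
    {"name": "orders-read-stock", "principals": ["orders"],
     "actions": ["stock:read"], "resources": ["inventory/stock"]},
    {"name": "debug-access", "principals": ["*"],
     "actions": ["*"], "resources": ["payments/charges"]},
]
```

Here `find_overly_permissive(EXAMPLE_POLICIES)` reports the `debug-access` policy twice, once for its principals and once for its actions. A pipeline could fail the build on any finding.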

10. Security Becomes a Runtime Architecture Concern

From a production perspective, security in distributed systems is no longer limited to application code. It becomes a runtime architecture problem involving infrastructure, orchestration, networking, identity management, and operational policy enforcement simultaneously.

Every service interaction participates in the security model. Authentication flows through APIs continuously. Secrets move across deployment pipelines. Authorization decisions happen dynamically under production traffic conditions.

What makes distributed security difficult is not simply the number of technologies involved. The real challenge is maintaining consistent trust boundaries while the runtime environment itself changes constantly underneath the platform.

Stable security architectures are not built by adding isolated protections randomly. They are built by designing systems where identity, trust, authorization, and encryption remain consistent across the entire distributed runtime environment.

That consistency becomes one of the defining characteristics of mature distributed platforms.

Summary

Microservices architectures dramatically expand the security responsibilities of distributed systems.

Communication that once remained internal now moves continuously across APIs, proxies, gateways, orchestration layers, and dynamically changing infrastructure. Authentication, authorization, secrets management, encryption, and trust validation all become distributed runtime concerns instead of centralized application concerns.

As systems scale, organizations must continuously secure:

- service-to-service communication

- distributed identity propagation

- runtime infrastructure

- secrets management

- traffic policies

- authorization boundaries

Zero-trust models, service meshes, API gateways, and centralised secrets platforms help solve these challenges, but they also introduce additional operational complexity.

Understanding these trade-offs becomes essential because modern distributed systems are no longer secured by network boundaries alone.

They are secured by continuously validating trust across every layer of runtime behaviour.