If you’ve worked with backend systems, you’ve already used concurrency—whether you realized it or not.

But most developers face this gap: they know the terms, but don’t fully understand why problems happen or how to reason about them.

This blog bridges that gap. We’ll go step by step:

- Define each concept clearly

- Explain why it exists

- Show when it becomes relevant

- Connect it to real-world systems

What is Concurrency?

Definition

Concurrency is the ability of a system to handle multiple tasks by making progress on them during overlapping time periods.

Why It Exists

In real backend systems:

- Most operations are I/O bound (DB calls, APIs)

- CPU spends significant time waiting

If a thread blocks during I/O, that CPU time is wasted unless we switch to another task.
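To make the point about waiting concrete, here is a minimal sketch that simulates three I/O calls with `Thread.sleep` and runs them on a small pool. The class name, `slowCall`, and the call names are made up for illustration; real I/O would replace the sleep.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical demo: names are illustrative, not from any real service.
class OverlappingWaitsDemo {
    // Simulated I/O call: the thread only waits, it does no CPU work.
    static String slowCall(String name, long millis) throws InterruptedException {
        Thread.sleep(millis);
        return name + " done";
    }

    // Runs three simulated calls on a pool of three threads and
    // returns the total elapsed time in milliseconds.
    static long runConcurrently(long millisPerCall) throws Exception {
        ExecutorService pool = Executors.newFixedThreadPool(3);
        try {
            List<Callable<String>> calls = new ArrayList<>();
            for (String name : new String[] {"db", "cache", "api"}) {
                calls.add(() -> slowCall(name, millisPerCall));
            }
            long start = System.nanoTime();
            for (Future<String> f : pool.invokeAll(calls)) {
                f.get(); // the three waits overlap instead of adding up
            }
            return (System.nanoTime() - start) / 1_000_000;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println("elapsed ms: " + runConcurrently(200));
    }
}
```

Run sequentially, the three calls would take about three times the per-call delay. Because the waits overlap, the elapsed time should stay close to a single delay.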

When You Actually Need It

You should think about concurrency when:

- Your service handles multiple requests (web servers)

- Tasks involve waiting (DB/network calls)

- Throughput matters more than single-task latency

Key Insight

Concurrency is not primarily about speed; it’s about resource utilization and system responsiveness.

Threads — Internal Execution Unit

Definition

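A thread is the smallest unit of execution that can be scheduled. In Java, a traditional (platform) thread is a thin wrapper around an OS thread, so the OS scheduler decides when it runs.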

Internal Structure

Each thread operates with:

- Stack (private) → method calls, local variables

- Heap (shared) → objects, shared state

- Program Counter → execution pointer

Most concurrency issues originate from shared heap memory

Why Threads Are Expensive

- OS-managed resources

- Memory overhead (the stack size is configurable with -Xss; the default is often around 1 MB per thread)

- Context switching cost

This is why modern systems avoid creating threads manually and rely on thread pools instead.

Race Condition

Definition

A race condition occurs when:

Multiple threads access and modify shared data concurrently, leading to inconsistent or incorrect results.

Why It Happens

Let’s break it technically:

- Shared Mutable State

  - Multiple threads operate on the same variable/object

  - Example: shared counter, cache, balance

- Non-Atomic Operations

  - Operations like count++ are not single-step. Internally:

    - Read value

    - Modify value

    - Write back

- Thread Interleaving

  - The scheduler can switch between threads at any point

  - No guarantee of execution order

These three together create inconsistency

When It Occurs in Real Systems

- Incrementing metrics (request counters)

- Updating financial balances

- Modifying shared collections

- Caching layers

Problem Example

int count = 0;

public void increment() {
    count++; // not thread-safe
}

How to Fix

1. synchronized (Mutual Exclusion)

Ensures only one thread enters the critical section

synchronized(this) {
    count++;
}

✔ Guarantees correctness

❌ Reduces parallelism due to blocking
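As a runnable illustration of the synchronized fix, here is a small sketch in which several threads increment one counter. The class and method names (`SynchronizedCounterDemo`, `runWorkers`) are invented for this example.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical demo class; names are illustrative only.
class SynchronizedCounterDemo {
    private int count = 0;

    // Only one thread at a time can run this method on a given instance.
    public synchronized void increment() {
        count++;
    }

    // Reads are synchronized too, so callers see the latest value.
    public synchronized int get() {
        return count;
    }

    // Starts `threads` workers that each call increment() `perThread` times.
    static int runWorkers(int threads, int perThread) throws InterruptedException {
        SynchronizedCounterDemo counter = new SynchronizedCounterDemo();
        List<Thread> workers = new ArrayList<>();
        for (int i = 0; i < threads; i++) {
            Thread t = new Thread(() -> {
                for (int j = 0; j < perThread; j++) {
                    counter.increment();
                }
            });
            workers.add(t);
            t.start();
        }
        for (Thread t : workers) {
            t.join(); // wait for every worker to finish
        }
        return counter.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(runWorkers(4, 10_000)); // prints 40000
    }
}
```

If you remove `synchronized` from `increment()`, the printed total can come out lower than 40000 on some runs, which is the lost-update race described above.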

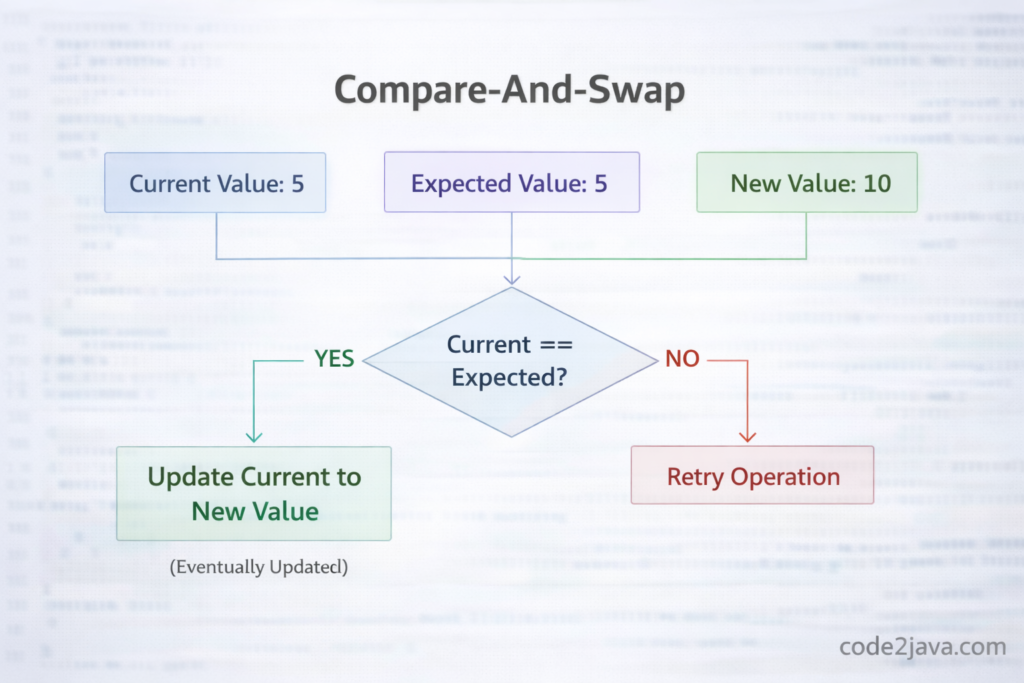

2. Atomic Variables (CAS-Based)

AtomicInteger count = new AtomicInteger();

count.incrementAndGet();

Uses Compare-And-Swap (CAS) at hardware level

✔ Non-blocking

✔ Better performance under low contention
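The same counter can be sketched with `AtomicInteger`, again using invented names for the demo class and helper method.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class; names are illustrative only.
class AtomicCounterDemo {
    // Starts `threads` workers that each increment the counter `perThread` times.
    static int runWorkers(int threads, int perThread) throws InterruptedException {
        AtomicInteger count = new AtomicInteger();
        Thread[] workers = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            workers[i] = new Thread(() -> {
                for (int j = 0; j < perThread; j++) {
                    count.incrementAndGet(); // atomic read-modify-write, no lock
                }
            });
            workers[i].start();
        }
        for (Thread t : workers) {
            t.join();
        }
        return count.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(runWorkers(4, 10_000)); // prints 40000
    }
}
```

For counters that many threads update very frequently, `java.util.concurrent.atomic.LongAdder` is worth considering, since it spreads updates across several cells to reduce contention.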

3. Design-Level Fix (Best)

Avoid shared mutable state

Examples:

- Stateless services

- Immutable objects

- Event-driven systems
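One way to picture "avoid shared mutable state" is to give each task its own data and combine the results only at the end. The sketch below assumes a simple range sum, and all names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical demo class; names are illustrative only.
class NoSharedStateDemo {
    // Sums 1..n by splitting the range into `parts` independent chunks.
    static long sumUpTo(int n, int parts) throws Exception {
        ExecutorService pool = Executors.newFixedThreadPool(parts);
        try {
            List<Callable<Long>> tasks = new ArrayList<>();
            int chunk = (n + parts - 1) / parts; // ceiling division
            for (int p = 0; p < parts; p++) {
                final int from = p * chunk + 1;
                final int to = Math.min(n, (p + 1) * chunk);
                tasks.add(() -> {
                    long local = 0; // lives on this task's own stack
                    for (int i = from; i <= to; i++) {
                        local += i;
                    }
                    return local; // result is handed back, never shared
                });
            }
            long total = 0;
            for (Future<Long> f : pool.invokeAll(tasks)) {
                total += f.get(); // combined on the calling thread only
            }
            return total;
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println(sumUpTo(1000, 4)); // prints 500500
    }
}
```

No lock or atomic is needed here because no two tasks ever write to the same variable.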

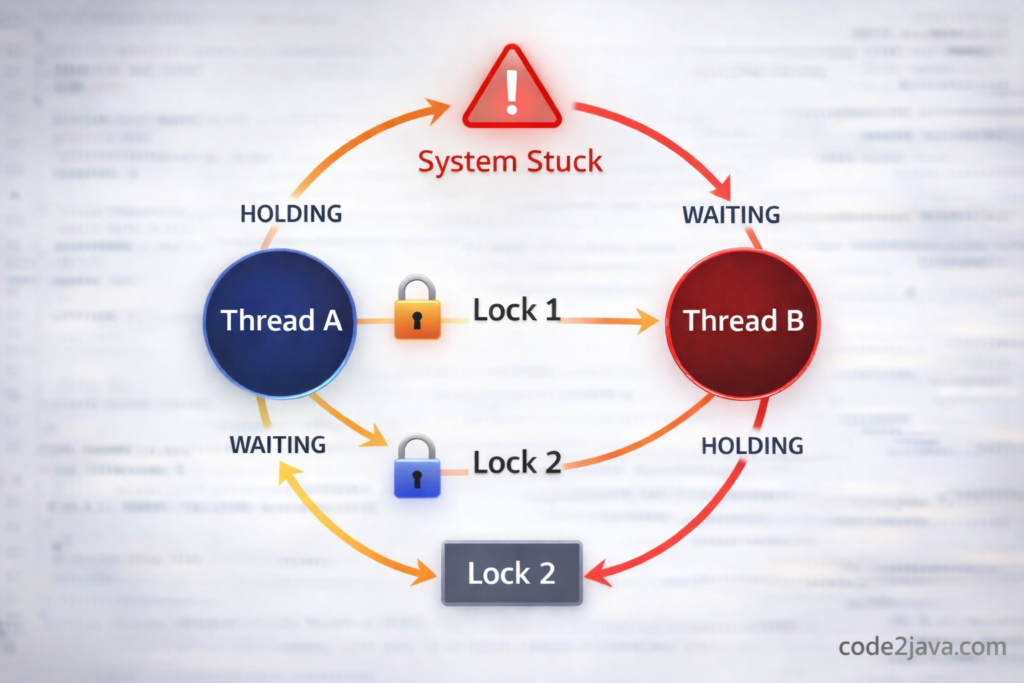

Deadlock

Definition

Deadlock is a situation where:

Two or more threads are blocked forever, each waiting for resources held by others.

Why It Happens

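Deadlock requires four conditions to hold at the same time:

- Mutual exclusion: a lock can be held by only one thread at a time

- Hold and wait: threads hold resources (locks) while requesting additional ones

- No forced release (no preemption)

- Circular dependency exists (circular wait)

All four together create a deadlock. Breaking any one of them prevents it.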

When It Occurs

- Nested synchronized blocks

- Multiple lock acquisition

- Database transactions with locks

- Distributed systems (rare but critical)

Example

Thread A → holds Lock1 → waits Lock2

Thread B → holds Lock2 → waits Lock1

How to Fix

1. Lock Ordering

- Always acquire locks in the same sequence

2. Timeout-Based Locking

- Avoid waiting indefinitely

3. Reduce Lock Scope

- Keep critical sections minimal
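Lock ordering can be sketched with a money transfer between two accounts. The `Account` type and the rule "lock the smaller id first" are assumptions made for this example. Any consistent global order would work, as long as ids are unique.

```java
// Hypothetical demo class; names are illustrative only.
class LockOrderingDemo {
    static final class Account {
        final int id;
        private long balance;

        Account(int id, long balance) {
            this.id = id;
            this.balance = balance;
        }

        long balance() {
            synchronized (this) {
                return balance;
            }
        }
    }

    // Always locks the account with the smaller id first,
    // regardless of the direction of the transfer.
    static void transfer(Account from, Account to, long amount) {
        Account first = from.id < to.id ? from : to;
        Account second = (first == from) ? to : from;
        synchronized (first) {
            synchronized (second) {
                from.balance -= amount;
                to.balance += amount;
            }
        }
    }

    // Two threads transfer in opposite directions at the same time.
    static long runOpposingTransfers(int rounds) throws InterruptedException {
        Account a = new Account(1, 1_000);
        Account b = new Account(2, 1_000);
        Thread t1 = new Thread(() -> {
            for (int i = 0; i < rounds; i++) transfer(a, b, 1);
        });
        Thread t2 = new Thread(() -> {
            for (int i = 0; i < rounds; i++) transfer(b, a, 1);
        });
        t1.start();
        t2.start();
        t1.join();
        t2.join();
        return a.balance() + b.balance();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(runOpposingTransfers(10_000)); // prints 2000
    }
}
```

If `transfer` locked `from` first and `to` second, the two threads could each grab one lock and wait forever for the other, which is exactly the Thread A / Thread B picture above.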

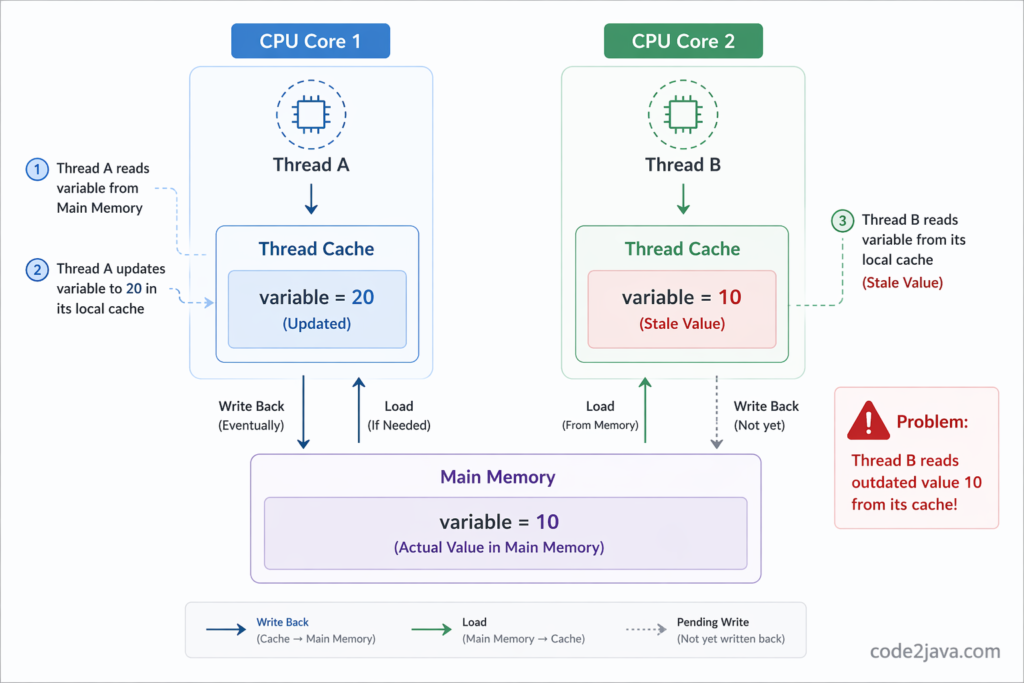

Java Memory Model (JMM)

Definition

The Java Memory Model defines:

How threads interact through memory: when a write by one thread becomes visible to another, and which reorderings of operations are allowed.

Why It Exists

Modern CPUs and compilers (including the JIT) optimize aggressively:

- Keep variables in registers and per-core caches

- Reorder instructions for performance

Without the JMM, multi-threaded behavior would be unpredictable.

Real Issue It Solves

Thread A updates variable → Thread B may NOT see updated value

This is a visibility problem

Happens-Before

A rule that ensures:

If A happens-before B, then B sees A’s changes

Examples:

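- Lock release → next acquire of the same lock

- Volatile write → subsequent read of the same variable

- Thread start/join: everything written before start() is visible to the new thread, and everything the thread wrote is visible after join() returns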
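The start/join rule is the easiest one to see in code. This minimal sketch uses plain, non-volatile fields on purpose, and the class and field names are made up.

```java
// Hypothetical demo class; names are illustrative only.
class HappensBeforeDemo {
    // Plain (non-volatile) fields: visibility relies only on start()/join().
    private int input;
    private int output;

    int compute(int value) throws InterruptedException {
        input = value;                  // (1) written before start()
        Thread worker = new Thread(() -> {
            output = input * 2;         // (2) guaranteed to see (1)
        });
        worker.start();
        worker.join();
        return output;                  // (3) guaranteed to see (2)
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(new HappensBeforeDemo().compute(21)); // prints 42
    }
}
```

Without the `join()`, step (3) would have no happens-before relationship with step (2), and the caller could legally read a stale `output`.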

“volatile” Keyword

Definition

volatile ensures that:

A variable’s latest value is always visible to all threads.

Why It Exists

To solve visibility issues caused by CPU caching

💡 When to Use

- Configuration flags

- Shutdown signals

- State indicators
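A shutdown signal is the classic case. In the sketch below, one thread spins until another flips a volatile flag. The names (`running`, `runAndStop`) and the 50 ms pause are arbitrary choices for the demo.

```java
// Hypothetical demo class; names are illustrative only.
class VolatileFlagDemo {
    // volatile: a write by one thread is visible to later reads by others.
    private volatile boolean running = true;
    private long iterations = 0; // only touched by the worker thread

    void stop() {
        running = false;
    }

    // Starts a worker, asks it to stop, and reports whether it exited.
    static boolean runAndStop() throws InterruptedException {
        VolatileFlagDemo demo = new VolatileFlagDemo();
        Thread worker = new Thread(() -> {
            while (demo.running) {   // re-reads the flag on every pass
                demo.iterations++;
                Thread.yield();
            }
        });
        worker.start();
        Thread.sleep(50);            // let the worker spin for a moment
        demo.stop();
        worker.join(2_000);          // wait up to 2 seconds for it to exit
        return !worker.isAlive();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(runAndStop()); // prints true
    }
}
```

With a non-volatile flag, the JIT is allowed to hoist the read out of the loop, and the worker might never notice the change.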

What It Does NOT Solve

volatile int count;

count++; // still unsafe

👉 Because: the operation is still non-atomic (read, modify, write)

synchronized

Definition

Provides mutual exclusion and memory visibility guarantees.

Why It Exists

To solve:

- Race conditions

- Memory visibility issues

When to Use

- Shared mutable data

- Critical sections

Trade-offs

- Blocking

- Reduced scalability under contention

Real World Problems

Most developers reach a point where they use concurrency but don’t fully understand:

- Why different APIs exist

- What problem each abstraction solves

- When to choose one over another

In this section, we’ll go deeper into the most important real-world tools:

- ReentrantLock

- Executor Framework

- Future & CompletableFuture

- Concurrent Collections & Atomic Variables

ReentrantLock — Beyond synchronized

What is ReentrantLock?

ReentrantLock is a more advanced and flexible locking mechanism than synchronized. It provides explicit control over locking behavior.

What Problem Does It Solve?

synchronized is simple, but limited:

- No timeout support

- Cannot interrupt a waiting thread

- No fairness control

- Lock is released automatically at the end of the block, so it cannot be acquired in one method and released in another (less control)

In complex systems, these limitations become bottlenecks.

Why It’s Called “Reentrant”

Reentrant means:

The same thread can acquire the same lock multiple times without blocking itself.

Example:

lock.lock();

lock.lock(); // allowed

Internally, it keeps a hold count, so each lock() must be matched by an unlock().
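Reentrancy usually shows up when one locked method calls another. Here is a small sketch that makes the internal counter visible through `getHoldCount()`. The class and method names are invented.

```java
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical demo class; names are illustrative only.
class ReentrancyDemo {
    private final ReentrantLock lock = new ReentrantLock();

    int outer() {
        lock.lock();                     // hold count becomes 1
        try {
            return inner();
        } finally {
            lock.unlock();               // hold count back to 0
        }
    }

    int inner() {
        lock.lock();                     // same thread re-acquires: count 2
        try {
            return lock.getHoldCount();
        } finally {
            lock.unlock();               // count back to 1
        }
    }

    boolean isLocked() {
        return lock.isLocked();
    }

    public static void main(String[] args) {
        ReentrancyDemo demo = new ReentrancyDemo();
        System.out.println(demo.outer());    // prints 2
        System.out.println(demo.isLocked()); // prints false
    }
}
```

The lock is only free again once every `lock()` has been paired with an `unlock()`.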

When Should You Use It?

Use ReentrantLock when:

- You need timeout-based locking

- You want to avoid deadlocks using tryLock()

- You need interruptible locks

- You want fairness (first-come, first-served)

Example

ReentrantLock lock = new ReentrantLock();

if (lock.tryLock()) { // returns immediately; false if another thread holds the lock
    try {
        // critical logic
    } finally {
        lock.unlock(); // always release in finally
    }
}

Trade-offs

✔ More control

✔ Better in complex systems

❌ More code

❌ Easy to misuse (must unlock manually)
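The timeout variant, `tryLock(timeout, unit)`, is what lets a thread give up instead of waiting forever. The sketch below is one way to wrap it. `runWithLock` and the latch-based demo around it are hypothetical helpers, not part of the JDK.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical demo class; names are illustrative only.
class TryLockTimeoutDemo {
    // Returns true if the work ran, false if the lock was not obtained in time.
    static boolean runWithLock(ReentrantLock lock, long timeoutMillis, Runnable work)
            throws InterruptedException {
        if (!lock.tryLock(timeoutMillis, TimeUnit.MILLISECONDS)) {
            return false; // give up instead of waiting forever
        }
        try {
            work.run();
            return true;
        } finally {
            lock.unlock();
        }
    }

    // Returns { attempt while another thread holds the lock, attempt after release }.
    static boolean[] demo() throws InterruptedException {
        ReentrantLock lock = new ReentrantLock();
        CountDownLatch held = new CountDownLatch(1);
        CountDownLatch release = new CountDownLatch(1);
        Thread holder = new Thread(() -> {
            lock.lock();
            try {
                held.countDown();   // signal: the lock is now taken
                release.await();    // keep holding until told to let go
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            } finally {
                lock.unlock();
            }
        });
        holder.start();
        held.await();
        boolean whileHeld = runWithLock(lock, 100, () -> { });
        release.countDown();
        holder.join();
        boolean afterRelease = runWithLock(lock, 100, () -> { });
        return new boolean[] { whileHeld, afterRelease };
    }

    public static void main(String[] args) throws InterruptedException {
        boolean[] r = demo();
        System.out.println(r[0] + " " + r[1]); // prints false true
    }
}
```

A caller that gets `false` can back off, retry later, or report an error, which is not possible with a plain synchronized block.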

Executor Framework — Managing Threads at Scale

What is Executor Framework?

Executor Framework is a high-level API that manages:

- Thread creation

- Task execution

- Resource utilization

What Problem Does It Solve?

Creating threads manually:

new Thread(() -> task()).start();

Problems:

- Expensive creation

- No reuse

- Hard to manage lifecycle

- No control over number of threads

This leads to performance issues in real systems.

How Executor Solves It

Executor introduces:

Thread Pool

Instead of creating new threads:

- Reuse existing threads

- Queue tasks

- Control concurrency

Internal Flow

Task → Queue → Thread Pool → Execution → Result

When to Use

- Web servers

- Microservices

- Background job processing

- Batch systems

Basically everywhere in production

Example

ExecutorService executor = Executors.newFixedThreadPool(5);

executor.submit(() -> {
    processRequest();
});

executor.shutdown(); // stop accepting new tasks; already submitted tasks still run
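To see thread reuse in action, this sketch submits many small tasks to a small pool and counts how many distinct threads ran them. The class and method names are invented, and the 5-second wait is an arbitrary limit for the demo.

```java
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class; names are illustrative only.
class ThreadPoolReuseDemo {
    // Runs `tasks` small jobs on a pool of `poolSize` threads and returns
    // how many distinct threads actually executed them.
    static int distinctThreadsUsed(int poolSize, int tasks) throws InterruptedException {
        ExecutorService executor = Executors.newFixedThreadPool(poolSize);
        Set<String> threadNames = ConcurrentHashMap.newKeySet();
        for (int i = 0; i < tasks; i++) {
            executor.execute(() -> {
                threadNames.add(Thread.currentThread().getName());
            });
        }
        executor.shutdown();                                // no new tasks accepted
        if (!executor.awaitTermination(5, TimeUnit.SECONDS)) {
            executor.shutdownNow();                         // interrupt stragglers
        }
        return threadNames.size();
    }

    public static void main(String[] args) throws InterruptedException {
        // 50 tasks, but never more than 3 threads.
        System.out.println(distinctThreadsUsed(3, 50));
    }
}
```

The shutdown-then-await pattern at the end is the usual way to let queued work finish before the application exits.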

Important Design Insight

Thread pool size must be chosen carefully:

- CPU-bound → fewer threads (roughly the number of CPU cores)

- I/O-bound → more threads (most of them are waiting at any given moment)
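A commonly cited rule of thumb, popularized by the book Java Concurrency in Practice, is threads ≈ cores × target utilization × (1 + wait time / compute time). The helper below is only a sketch of that formula. The method name and parameters are made up, and real systems should be sized by measurement.

```java
// Hypothetical helper; a starting point for tuning, not a definitive rule.
class PoolSizing {
    // cores:             number of available CPU cores
    // targetUtilization: desired CPU utilization, between 0 and 1
    // waitMillis:        average time a task spends waiting (I/O)
    // computeMillis:     average time a task spends on the CPU
    static int suggestedThreads(int cores, double targetUtilization,
                                double waitMillis, double computeMillis) {
        double size = cores * targetUtilization * (1 + waitMillis / computeMillis);
        return Math.max(1, (int) Math.round(size));
    }

    public static void main(String[] args) {
        // Pure CPU work: about one thread per core.
        System.out.println(suggestedThreads(8, 1.0, 0, 10));  // prints 8
        // Tasks that wait 90 ms for every 10 ms of CPU work.
        System.out.println(suggestedThreads(8, 1.0, 90, 10)); // prints 80
    }
}
```

In practice you would pass `Runtime.getRuntime().availableProcessors()` for `cores` and take the wait and compute times from profiling.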

Future — Representing Async Result

What is Future?

Future represents:

A result of a computation that will be available in the future.

What Problem Does It Solve?

Without Future:

- You run a task

- You block until it finishes

No flexibility

With Future

You can:

- Submit task

- Continue doing other work

- Get result later

Example

Future<String> future = executor.submit(() -> "Hello");

String result = future.get(); // blocks
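A slightly fuller sketch of the same idea: submit the task, do something else, then collect the result with a timeout so the caller cannot block forever. The class name, the simulated delay, and the 2-second timeout are assumptions for the demo.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class; names are illustrative only.
class FutureDemo {
    static String fetchGreeting() throws Exception {
        ExecutorService executor = Executors.newSingleThreadExecutor();
        try {
            Future<String> future = executor.submit(() -> {
                Thread.sleep(100);      // simulate a slow remote call
                return "Hello";
            });
            String local = "World";     // other work happens on the caller's thread
            // Blocks, but for at most 2 seconds (throws TimeoutException after that).
            String remote = future.get(2, TimeUnit.SECONDS);
            return remote + ", " + local;
        } finally {
            executor.shutdown();
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println(fetchGreeting()); // prints Hello, World
    }
}
```

If the task throws, `get` rethrows the failure wrapped in an `ExecutionException`, so the cause has to be unwrapped by hand.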

Limitation of Future

- get() blocks

- No chaining

- No easy error handling

- No composition

This is why CompletableFuture was introduced in Java 8.

CompletableFuture — Async Programming Done Right

What is CompletableFuture?

A powerful API for:

Non-blocking, asynchronous programming with chaining and composition.

What Problem Does It Solve?

Problems with Future:

- Blocking calls

- No chaining

- No completion callbacks (the caller must block or poll)

How CompletableFuture Helps

It allows:

- Async execution

- Transform results

- Combine multiple tasks

- Handle errors

Example

CompletableFuture.supplyAsync(() -> fetchData())
    .thenApply(data -> process(data))
    .thenAccept(result -> save(result));

Key Concepts

- thenApply → transform result

- thenCompose → chain async tasks

- thenCombine → merge results
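Here is a minimal sketch that puts the three operators together and adds `exceptionally` for error handling. The `fetchUserId`, `fetchPlan`, and `fetchCredits` methods are hypothetical stand-ins for remote calls.

```java
import java.util.concurrent.CompletableFuture;

// Hypothetical demo class; the fetch* methods stand in for remote calls.
class CompletableFutureDemo {
    static CompletableFuture<Integer> fetchUserId(String name) {
        return CompletableFuture.supplyAsync(() -> name.length());
    }

    static CompletableFuture<String> fetchPlan(int userId) {
        return CompletableFuture.supplyAsync(() -> userId % 2 == 0 ? "free" : "pro");
    }

    static CompletableFuture<Integer> fetchCredits() {
        return CompletableFuture.supplyAsync(() -> 10);
    }

    static String describe(String name) {
        // thenCompose: the second async call depends on the first result.
        CompletableFuture<String> plan = fetchUserId(name)
                .thenCompose(id -> fetchPlan(id));
        // Independent call that can run at the same time.
        CompletableFuture<Integer> credits = fetchCredits();
        return plan
                .thenCombine(credits, (p, c) -> p + ":" + c) // merge two results
                .thenApply(String::toUpperCase)              // transform
                .exceptionally(ex -> "UNAVAILABLE")          // fallback on any failure
                .join();                                     // wait only at the very end
    }

    public static void main(String[] args) {
        System.out.println(describe("alice")); // prints PRO:10
        System.out.println(describe(null));    // prints UNAVAILABLE
    }
}
```

The `null` call fails inside the first stage, and the failure skips the later stages until `exceptionally` supplies a fallback value.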

When to Use

- Parallel API calls

- Microservices aggregation

- Event-driven systems

Trade-offs

✔ Highly scalable

✔ Non-blocking

❌ Harder to debug

❌ Complex chains

Concurrent Collections — Thread-Safe Data Structures

What Are Concurrent Collections?

These are collections designed to work safely with multiple threads.

Examples:

- ConcurrentHashMap

- CopyOnWriteArrayList

- BlockingQueue

What Problem Do They Solve?

Normal collections like:

- HashMap

- ArrayList

are NOT thread-safe.

Problems:

- Data corruption

- ConcurrentModificationException

- Inconsistent reads

How Concurrent Collections Work

Instead of locking everything:

They use smarter techniques:

- Fine-grained locking

- Lock-free algorithms

- CAS operations

Example: ConcurrentHashMap

Why It’s Efficient

- Doesn’t lock the entire map

- Writes lock only small parts (individual buckets)

- Reads generally proceed without locking
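A typical use is a shared tally. The sketch below counts words from several threads with `merge`, which updates each key atomically. The class name and the way work is split across threads are choices made for the demo.

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical demo class; names are illustrative only.
class WordCountDemo {
    static ConcurrentHashMap<String, Integer> count(List<String> words, int threads)
            throws InterruptedException {
        ConcurrentHashMap<String, Integer> counts = new ConcurrentHashMap<>();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            final int offset = t;
            workers[t] = new Thread(() -> {
                // Each worker handles every `threads`-th word, starting at `offset`.
                for (int i = offset; i < words.size(); i += threads) {
                    counts.merge(words.get(i), 1, Integer::sum); // atomic per key
                }
            });
            workers[t].start();
        }
        for (Thread w : workers) {
            w.join();
        }
        return counts;
    }

    public static void main(String[] args) throws InterruptedException {
        List<String> words = Arrays.asList("a", "b", "a", "c", "a", "b");
        ConcurrentHashMap<String, Integer> counts = count(words, 3);
        System.out.println(counts.get("a") + " " + counts.get("b") + " " + counts.get("c")); // prints 3 2 1
    }
}
```

Note that a separate `get` followed by `put` would reintroduce the race. The atomicity comes from doing the read and the update in a single `merge` call.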

When to Use

- Shared caches

- Multi-threaded processing

- High read/write systems

Atomic Variables — Lock-Free Concurrency

What Are Atomic Variables?

Classes like:

- AtomicInteger

- AtomicLong

- AtomicReference

They provide:

Thread-safe operations without using locks

What Problem Do They Solve?

Using synchronized:

- Causes blocking

- Reduces performance

How Atomic Works

They use:

CAS (Compare-And-Swap)

Flow:

- Read value

- Compare with expected

- Update if unchanged, otherwise retry
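The flow above can be written out by hand with `compareAndSet`. This sketch keeps a running maximum across threads. `updateMax` and `maxOf` are invented names for the example.

```java
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical demo class; names are illustrative only.
class CasLoopDemo {
    // Atomically raises `max` to `candidate` if the candidate is larger.
    static void updateMax(AtomicInteger max, int candidate) {
        while (true) {
            int current = max.get();                      // read value
            if (candidate <= current) {
                return;                                   // nothing to update
            }
            if (max.compareAndSet(current, candidate)) {  // compare, update if unchanged
                return;
            }
            // Another thread changed the value in between: loop and retry.
        }
    }

    // Each thread offers the values base .. base + perThread - 1.
    static int maxOf(int threads, int perThread) throws InterruptedException {
        AtomicInteger max = new AtomicInteger(Integer.MIN_VALUE);
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            final int base = t * perThread;
            workers[t] = new Thread(() -> {
                for (int j = 0; j < perThread; j++) {
                    updateMax(max, base + j);
                }
            });
            workers[t].start();
        }
        for (Thread w : workers) {
            w.join();
        }
        return max.get();
    }

    public static void main(String[] args) throws InterruptedException {
        System.out.println(maxOf(4, 1000)); // prints 3999
    }
}
```

No thread ever blocks here. A thread that loses the race simply re-reads the value and tries again.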

Why This Is Powerful

- No locking

- High performance

- Scales better

Limitations

- Complex logic becomes hard

- ABA problem

When to Use

- Counters

- Metrics

- Simple shared variables

Key Takeaway (Very Important)

These tools exist because each solves a specific problem:

| Problem | Solution |
|---|---|
| Shared data inconsistency | synchronized / locks |
| Need flexibility | ReentrantLock |
| Thread management | Executor |
| Async result handling | Future |
| Async workflows | CompletableFuture |
| Shared data structures | Concurrent Collections |
| High-performance counters | Atomic Variables |

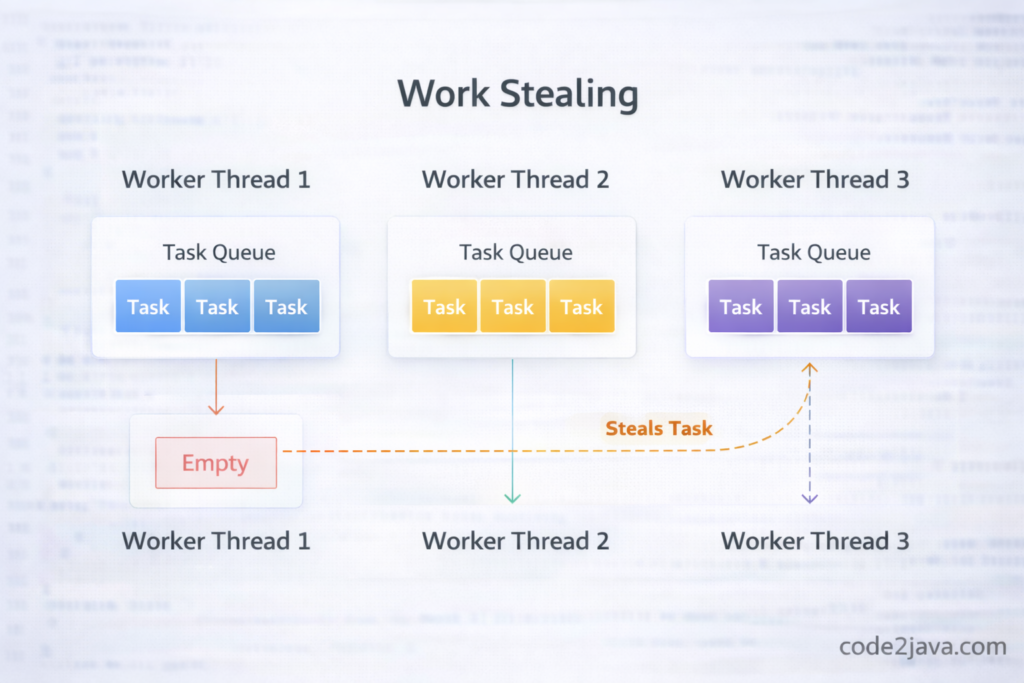

Parallelism Tools

Fork/Join

- Breaks tasks recursively

- Uses work-stealing

Parallel Streams

- Uses ForkJoinPool internally

- Best for CPU-bound tasks
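Both tools can be sketched on the same problem, summing a large array. The `SumTask` class and its threshold of 10,000 elements are arbitrary choices for illustration.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;
import java.util.stream.LongStream;

// Hypothetical demo class; names and the threshold are illustrative only.
class ForkJoinSumDemo {
    static final class SumTask extends RecursiveTask<Long> {
        private static final int THRESHOLD = 10_000; // below this, sum directly
        private final long[] data;
        private final int from;
        private final int to;

        SumTask(long[] data, int from, int to) {
            this.data = data;
            this.from = from;
            this.to = to;
        }

        @Override
        protected Long compute() {
            if (to - from <= THRESHOLD) {
                long sum = 0;
                for (int i = from; i < to; i++) {
                    sum += data[i];
                }
                return sum;
            }
            int mid = (from + to) >>> 1;
            SumTask left = new SumTask(data, from, mid);
            SumTask right = new SumTask(data, mid, to);
            left.fork();                       // queue the left half (idle workers may steal it)
            long rightSum = right.compute();   // do the right half ourselves
            return left.join() + rightSum;
        }
    }

    static long forkJoinSum(long[] data) {
        return ForkJoinPool.commonPool().invoke(new SumTask(data, 0, data.length));
    }

    // Same result through a parallel stream.
    static long parallelStreamSum(long[] data) {
        return LongStream.of(data).parallel().sum();
    }

    public static void main(String[] args) {
        long[] data = LongStream.rangeClosed(1, 100_000).toArray();
        System.out.println(forkJoinSum(data));       // prints 5000050000
        System.out.println(parallelStreamSum(data)); // prints 5000050000
    }
}
```

For a job this small the sequential loop may well be faster. The split only pays off when each chunk carries enough CPU work to outweigh the coordination cost.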

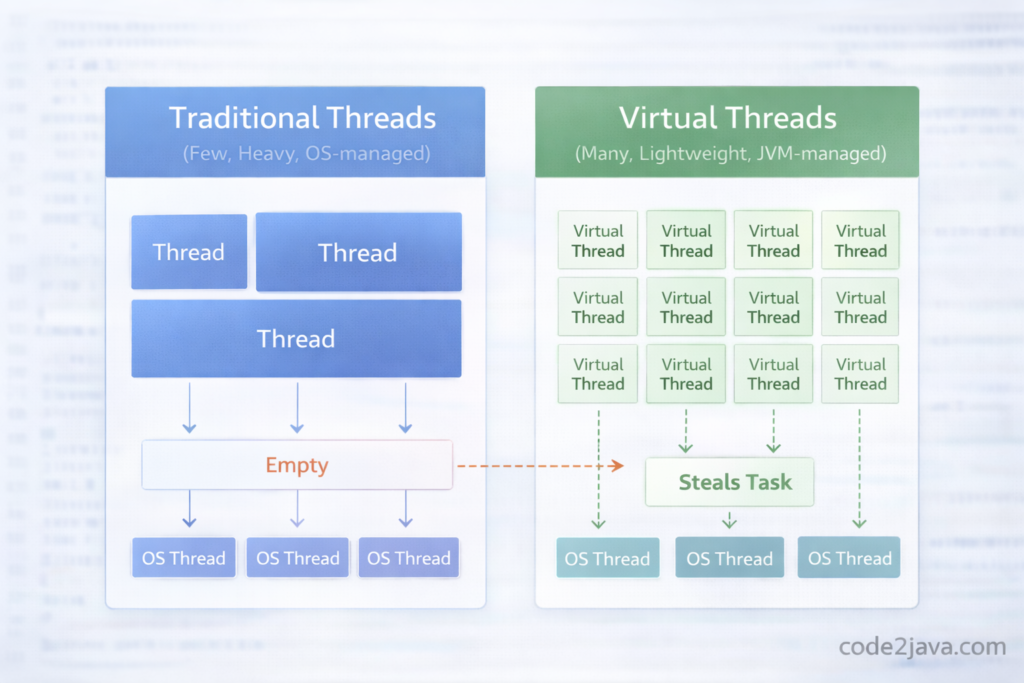

Virtual Threads (Modern Java)

Definition

Lightweight threads managed by the JVM rather than the OS (Project Loom, finalized in Java 21).

Why They Exist

Traditional threads:

- Heavyweight

- Limited scalability

When to Use

- High concurrency APIs

- I/O-heavy workloads

Designing Concurrent Systems

Preferred Approach

- Immutability

- Stateless design

- High-level abstractions

- Locks (last resort)

Key Insight

Good concurrency design avoids problems instead of fixing them later

Debugging Multithreading

Tools

- jstack

- Thread dumps

- VisualVM

Common Issues

- Deadlocks

- Thread leaks

- High CPU usage
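Deadlocks can also be checked from inside the application using the JDK’s management API, which gives the same kind of information jstack reports. This is a minimal sketch. The class and method names are made up, and it assumes a JVM that supports this kind of monitoring, as HotSpot does.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.ThreadInfo;
import java.lang.management.ThreadMXBean;
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper; names are illustrative only.
class DeadlockCheckDemo {
    // Returns the names of threads currently stuck in a deadlock (empty if none).
    static List<String> deadlockedThreadNames() {
        ThreadMXBean bean = ManagementFactory.getThreadMXBean();
        long[] ids = bean.findDeadlockedThreads(); // null when no deadlock exists
        List<String> names = new ArrayList<>();
        if (ids != null) {
            for (ThreadInfo info : bean.getThreadInfo(ids)) {
                if (info != null) { // a thread may have ended in the meantime
                    names.add(info.getThreadName());
                }
            }
        }
        return names;
    }

    public static void main(String[] args) {
        System.out.println(deadlockedThreadNames()); // prints [] when nothing is deadlocked
    }
}
```

A health-check endpoint or a scheduled task could call a helper like this and raise an alert when the list is not empty.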

Summary

Concurrency is about:

- Managing shared state safely

- Understanding execution behavior

- Making trade-offs consciously

If you understand:

- Why problems happen

- When they occur

- How to fix them

You’re already ahead of most developers.